The hallucination index 2026: How often is your AI making things up?

Updated: March 16, 2026

Compare the 2026 hallucination rates for ChatGPT, Claude, and Gemini. Discover why "thinking" models lie more and how to spot errors on Eye2.AI.

TL;DR: In 2026, AI models are smarter than ever, but they haven't stopped lying. While some models have dropped their error rates below 1% on simple "grounded" tasks, newer reasoning models can hallucinate 33% to 51% of the time on open-ended factual benchmarks. Eye2.AI is your defense against these fabrications, using multi-model consensus to flag lies before you believe them.

Table of Contents

Are AI hallucinations getting better or worse in 2026?

The 2026 hallucination leaderboard

Why do "thinking" models hallucinate more?

How Eye2.AI catches what a single AI misses

FAQs

Are AI hallucinations getting better or worse in 2026?

The data for 2026 reveals a "situational risk": how often a model makes things up depends heavily on the kind of task you give it.

The Good News: On "grounded" tasks (like summarizing a provided document), hallucination rates have plummeted. Top-tier models like Gemini 2.0 Flash and ChatGPT-o3 mini now boast factual consistency scores of roughly 99.2% to 99.3% (a hallucination rate of only 0.7% to 0.8%).

The Bad News: On open-ended "General Knowledge" tasks, the numbers are grim. In domain-specific evaluations (such as medical or technical analysis), hallucination rates frequently remain between 10% and 20%.
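The two ways of quoting the summarization numbers above are complements of each other. A minimal sketch of that arithmetic, assuming the hallucination rate is simply 100% minus the factual consistency score (the function name is illustrative, not part of any benchmark's tooling):

```python
def hallucination_rate(consistency_pct: float) -> float:
    """Return the hallucination rate (%) implied by a factual consistency score (%).

    Assumption: the two metrics are exact complements (rate = 100 - consistency).
    """
    if not 0.0 <= consistency_pct <= 100.0:
        raise ValueError("consistency must be a percentage between 0 and 100")
    # Round to two decimals to hide floating-point noise such as 0.7999999.
    return round(100.0 - consistency_pct, 2)


print(hallucination_rate(99.2))  # 0.8
print(hallucination_rate(99.3))  # 0.7
```

Read this way, a 99.2% consistency score and a 0.8% hallucination rate are the same measurement seen from opposite sides.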

The 2026 hallucination leaderboard

According to the latest Vectara HHEM Leaderboard and independent factuality benchmarks, here is how the heavy hitters rank on factual consistency as of early 2026:

| AI Model Family | Hallucination Rate (Summarization) | SimpleQA Verified Score (Factuality) |
| --- | --- | --- |
| Gemini 2.5 Pro | ~1.1% | 55.6% (Top Score) |
| GPT-5 | ~1.4% | 52.3% |
| Claude 4 Opus | ~1.8% | 50.0% |
| ChatGPT-o3 mini | 0.79% (Best in Class) | N/A |
| Grok-3 Search | ~5.8%* | 94% Error Rate (News Identification) |

*Grok-3 results vary significantly depending on the benchmark: its reasoning scores are high, but news-source identification remains a struggle.

Why do "thinking" models hallucinate more?

One of the most shocking findings of 2026 is the reasoning paradox. Models optimized for "deep thinking" (like the o3 series) often have higher hallucination rates on short, factual questions.

The Stat: Newer reasoning-focused models have recorded hallucination rates of 33% to 51% on factual benchmarks like SimpleQA and PersonQA.

Why? These models are trained to produce the most statistically likely answer rather than assess their own confidence. This leads them to "reason" a plausible-sounding fabrication into existence instead of admitting "I don't know".

How Eye2.AI catches what a single AI misses

You shouldn't have to guess if your AI is lying. Eye2.AI builds a structural solution to the problem by automating the "jury of AIs" approach.

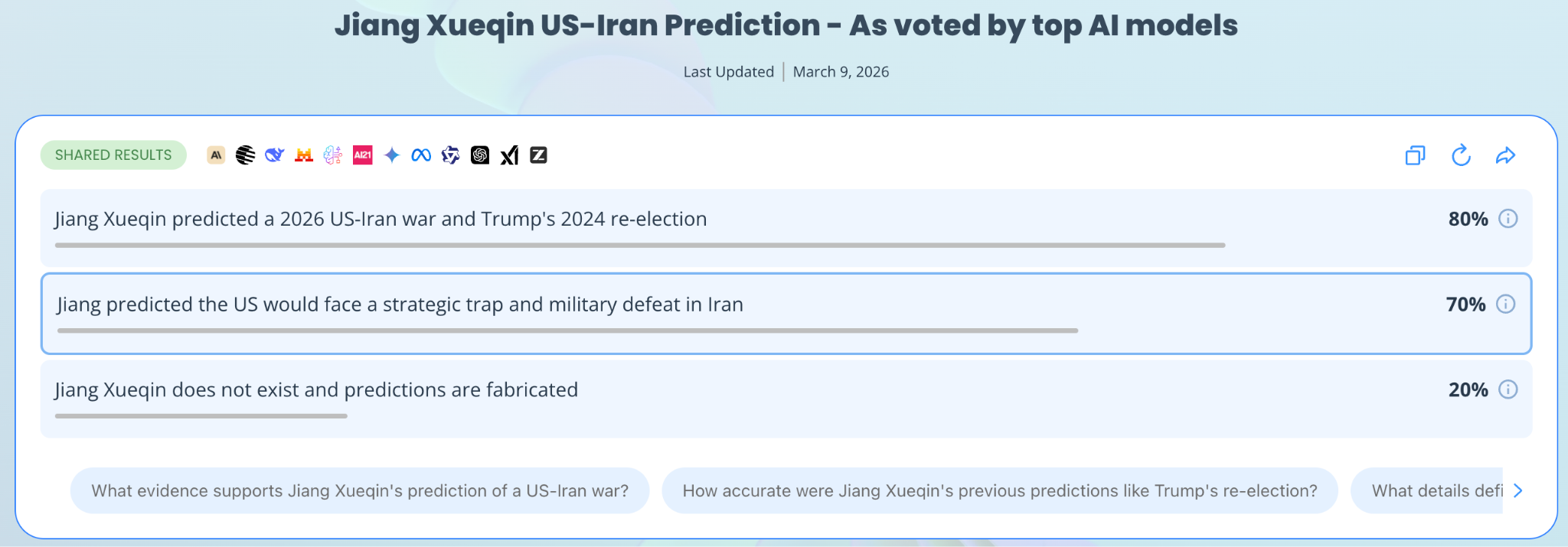

The Consensus Effect: When you see 90% agreement across models such as ChatGPT, Claude, and Gemini on Eye2.AI, you’ve found the "truth layer." If only 14% of models agree on a fact (like a specific citation), it is very likely a hallucination.

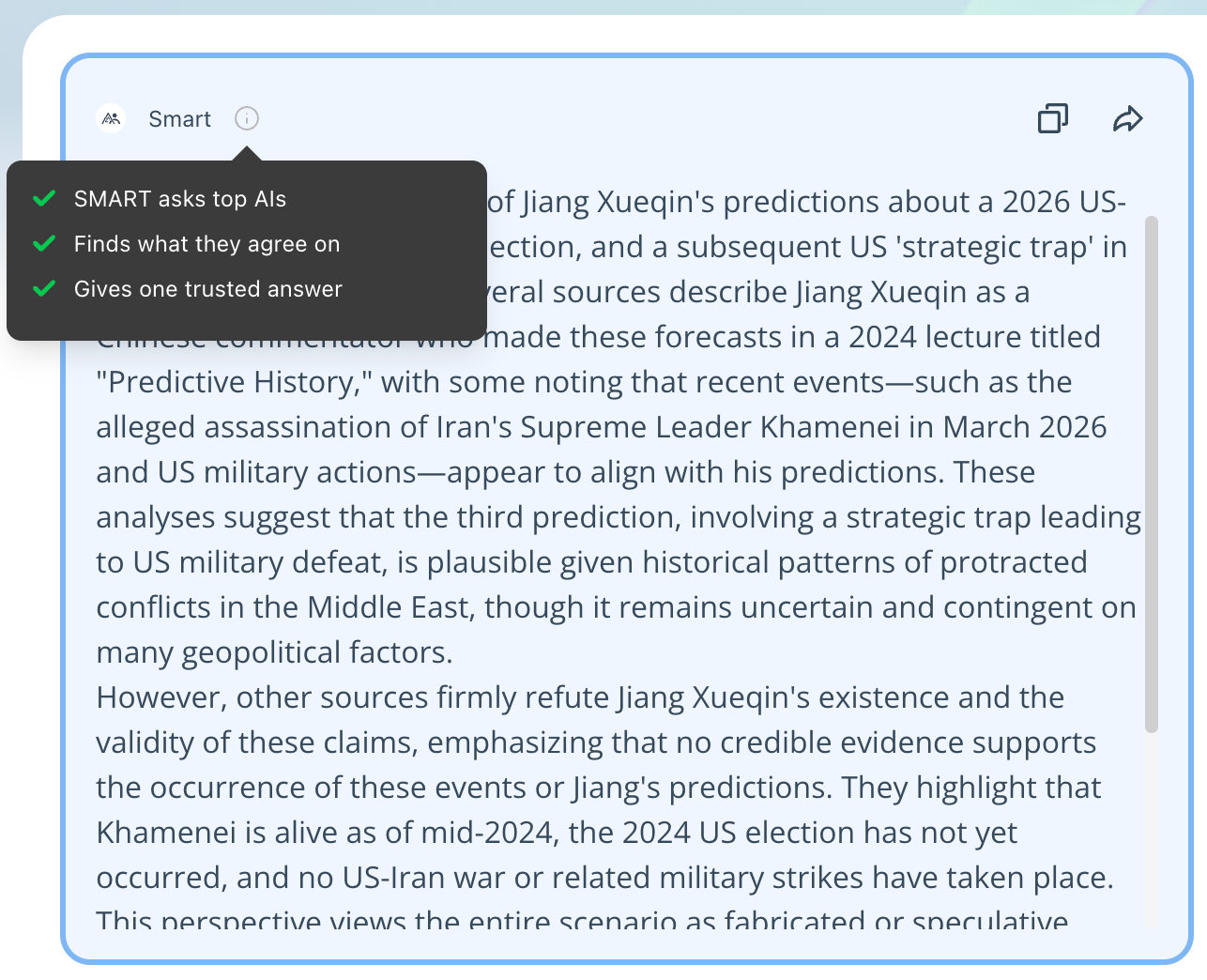

SMART Consensus: Use Eye2.AI's SMART to compare answers and extract only what the models agree on, effectively filtering out the single-model bluffs that lead to costly legal and professional errors.

Shared Percentages: Every shared result on Eye2.AI includes a transparency percentage, showing you exactly how many models back each claim.
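The agreement percentages described above can be illustrated with a short sketch. This is not Eye2.AI's actual implementation: the function name, the exact-match comparison of answers, the 50% flagging threshold, and the seven-model panel are all assumptions made for illustration.

```python
from collections import Counter


def consensus_report(answers: dict[str, str], flag_below: float = 0.5) -> dict[str, dict]:
    """Summarize how many models back each distinct answer.

    `answers` maps a (hypothetical) model name to its answer. Answers are
    compared after trimming whitespace and lowercasing, a simplifying assumption;
    a real system would need a more robust way to match equivalent claims.
    """
    if not answers:
        raise ValueError("need at least one model answer")
    counts = Counter(a.strip().lower() for a in answers.values())
    total = len(answers)
    report = {}
    for answer, n in counts.items():
        share = n / total
        report[answer] = {
            "agreement_pct": round(100 * share),
            # Assumed rule: answers backed by a minority of models are suspect.
            "flagged": share < flag_below,
        }
    return report


# Hypothetical panel of seven models answering the same factual question.
panel = {f"model_{i}": "1998" for i in range(6)}
panel["model_6"] = "2001"

for answer, stats in consensus_report(panel).items():
    print(answer, stats)
```

With seven models, a claim backed by only one of them comes out at about 14% agreement and is flagged, while the answer shared by the other six lands at about 86%.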

FAQs

1. Which AI hallucinates the least in 2026?

For summarizing documents, ChatGPT-o3 mini and Gemini 2.0 Flash currently hold the lowest rates at roughly 0.7% to 0.8%.

2. Can RAG (Retrieval Augmented Generation) stop hallucinations?

RAG can reduce hallucinations by 40% to 71% by forcing models to ground their answers in external documents. However, errors still persist even when the model is given the source.

3. Why are hallucination rates so high for Grok?

While Grok performs well on reasoning benchmarks, it has shown an error rate as high as 94% when specifically asked to identify and cite news sources.
