We asked 4 AIs to predict the Golden Globes. Here’s what happened.

Updated | January 16, 2026

How accurate were the AI predictions for the 2026 Golden Globes? We compare the Eye2.AI consensus forecast against the real nominations. See the results.

TL;DR: The Golden Globe nominations were announced on December 8. But on November 28, Eye2.AI already had its list. We didn't ask just one AI. We asked Gemini, ChatGPT, Claude, and Mistral to agree on the nominees. Did the consensus beat the "experts"? Here is the scorecard.

Table of Contents

Which movies did the AI predict perfectly?

Where did the AI get the category wrong?

What was the final accuracy score?

FAQs

Which movies did the AI predict perfectly?

When the AIs agreed, they were spot on.

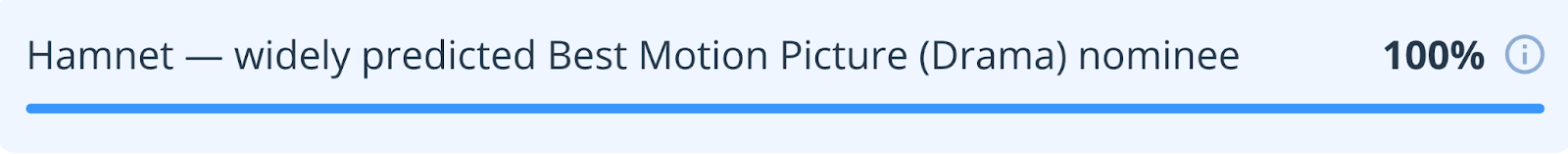

Hamnet: The consensus was 100% sure this would be nominated for Best Drama. Result? Correct.

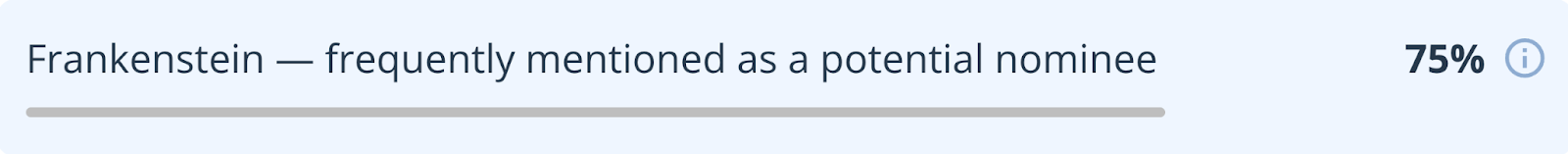

Frankenstein: The models had 75% confidence in Guillermo del Toro’s movie. Result? Correct.

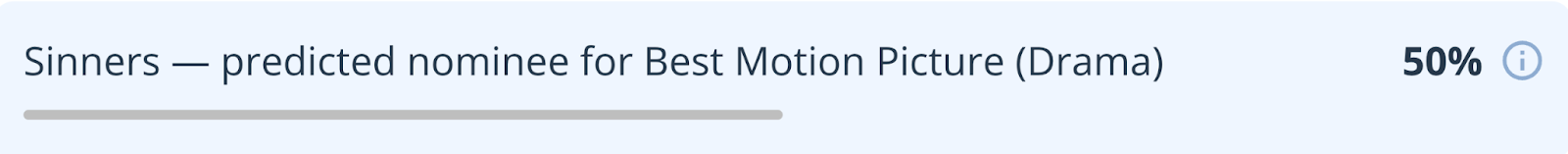

Sinners: This was a toss-up (50% confidence), but the AIs kept it on the list. Result? Correct.

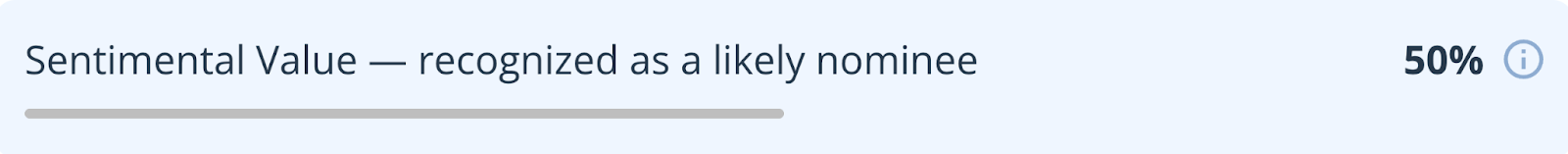

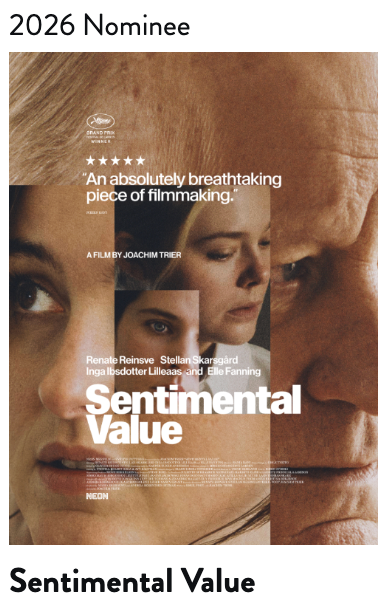

Sentimental Value: A risky pick (50% confidence), but the consensus held firm. Result? Correct.

Where did the AI get the category wrong?

The AIs correctly picked these movies as nominees, but placed them in the wrong category.

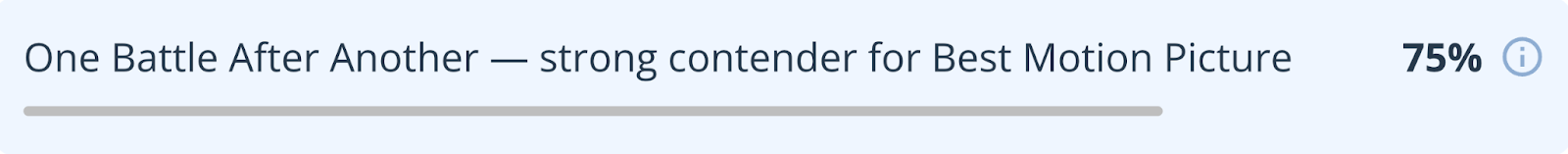

One Battle After Another:

Prediction: Best Drama.

Reality: Best Musical or Comedy.

What happened: The AIs knew it was a top contender (75% confidence), but the Golden Globes put it in the Musical or Comedy category.

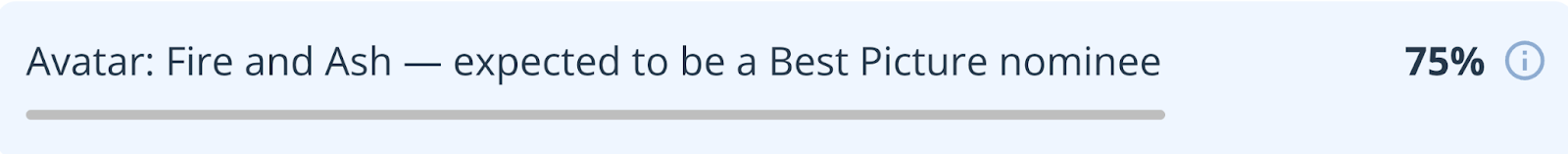

Avatar: Fire and Ash:

Prediction: Best Drama.

Reality: Cinematic and Box Office Achievement.

What happened: The AIs expected it to compete for the main drama prize, but the voters put it in the "Blockbuster" category instead.

What was the final accuracy score?

Movies Correctly Identified: 6 out of 6 (100%)

Categories Correctly Predicted: 4 out of 6 (67%)

The Takeaway: Prediction markets guess. Pundits argue. But when the AIs agree, they find the signal in the noise.
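For readers who want to check the arithmetic, the scorecard can be recomputed in a few lines of Python. This is only a sketch: the per-film flags are transcribed from the results in this article, and the helper name `accuracy` is made up for illustration.

```python
# Each entry: (film, was it nominated?, was the predicted category right?)
# Values are transcribed from the scorecard in this article.
scorecard = [
    ("Hamnet", True, True),
    ("Frankenstein", True, True),
    ("Sinners", True, True),
    ("Sentimental Value", True, True),
    ("One Battle After Another", True, False),
    ("Avatar: Fire and Ash", True, False),
]


def accuracy(flags):
    """Return (hits, total, hit rate) for an iterable of booleans."""
    flags = list(flags)
    hits = sum(flags)
    rate = hits / len(flags) if flags else 0.0
    return hits, len(flags), rate


movie_hits, total, movie_rate = accuracy(nominated for _, nominated, _ in scorecard)
category_hits, _, category_rate = accuracy(correct for _, _, correct in scorecard)

print(f"Movies correctly identified: {movie_hits} out of {total} ({movie_rate:.0%})")
print(f"Categories correctly predicted: {category_hits} out of {total} ({category_rate:.0%})")
```

Note that 4 out of 6 is 66.7%, so the category score rounds to 67% when shown as a whole percent.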

FAQs

1. How does Eye2.AI predict awards like the Golden Globes?

Eye2.AI uses a "consensus" method by analyzing data from multiple leading models (including Gemini, ChatGPT, Claude, and Mistral) rather than relying on a single source. This approach identifies a "signal" by seeing which nominees are consistently predicted across different AI systems.
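One way to picture a consensus like this is as a simple vote: each model submits its list of likely nominees, and a film's confidence is the share of models that named it. The sketch below is an assumption for illustration, not Eye2.AI's actual implementation. The function name `consensus_confidence`, the model keys, and the placeholder film titles are all hypothetical. With four models, a vote of this kind would produce confidence levels in steps of 25%, which is in line with the 100%, 75%, and 50% figures quoted in the scorecard.

```python
from collections import Counter


def consensus_confidence(picks_by_model):
    """Return {film: share of models that picked it}, highest share first."""
    n_models = len(picks_by_model)
    if n_models == 0:
        return {}
    # Count each film once per model, even if a model lists it twice.
    votes = Counter(
        film for picks in picks_by_model.values() for film in set(picks)
    )
    # Sort by vote count (descending), then by title for a stable order.
    ranked = sorted(votes.items(), key=lambda item: (-item[1], item[0]))
    return {film: count / n_models for film, count in ranked}


# Hypothetical picks with placeholder titles, for illustration only.
picks = {
    "model_1": ["Film A", "Film B", "Film C"],
    "model_2": ["Film A", "Film B"],
    "model_3": ["Film A", "Film B", "Film C"],
    "model_4": ["Film A", "Film D"],
}

for film, share in consensus_confidence(picks).items():
    print(f"{film}: {share:.0%}")
```

A film named by all four models lands at 100%, while one named by only two lands at 50%, so a cutoff on the share decides which films make the final list.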

2. When did Eye2.AI release its 2026 Golden Globe predictions?

The consensus predictions were published on November 28, ten days before the official nominations were announced on December 8.

3. How accurate were the AI predictions for the 2026 nominations?

The AI consensus correctly identified 100% of the movies on its list (6 out of 6). However, its category accuracy was lower at 67% (4 out of 6) because it placed two of the films in a different category from the one they were actually nominated in.

4. Why did the AI miss the specific categories for some films?

The AI often predicts based on standard genre definitions (Drama vs. Comedy), but awards bodies like the Golden Globes occasionally use different rules. For example, the AI predicted One Battle After Another for Best Drama, but it was nominated in the Musical or Comedy category.

5. Which 2026 films were predicted with the highest confidence?

The movie Hamnet was predicted with 100% confidence by the consensus and was successfully nominated for Best Drama. Frankenstein followed with 75% confidence and also secured a nomination.

6. Is AI better than human experts at awards forecasting?

While pundits often disagree, the Eye2.AI scorecard showed that when multiple AI models reach a high-confidence consensus, they can be remarkably accurate at identifying which films have the necessary "buzz" to secure a nomination.
